Artificial intelligence (AI) refers to computational techniques that enable systems to perform tasks associated with human judgment, such as pattern recognition, classification, prediction, language processing, anomaly detection, and decision support. In the financial crime environment, AI is increasingly important because firms and authorities are dealing with growing data volumes, faster payment flows, more complex criminal typologies, and stronger expectations around timely, risk-based intervention. The Financial Action Task Force (FATF) has noted that AI and related technologies can help analyze large volumes of data, identify correlations and anomalies, and strengthen anti-money laundering and counter-terrorist financing (AML/CFT) efforts when used appropriately and interpreted by subject-matter experts.

From a professional financial crime perspective, AI should not be understood as a single tool or product. It is better viewed as a set of analytical capabilities that can support different elements of the control framework, including customer due diligence, identity verification, transaction monitoring, sanctions screening, alert prioritization, investigation support, and suspicious activity analysis. AI can help firms process structured and unstructured data, identify patterns that traditional rules may miss, and improve the speed and consistency of certain operational tasks. FATF has specifically highlighted potential benefits for onboarding, risk identification, monitoring, communication of suspicious activity, and public-sector oversight.

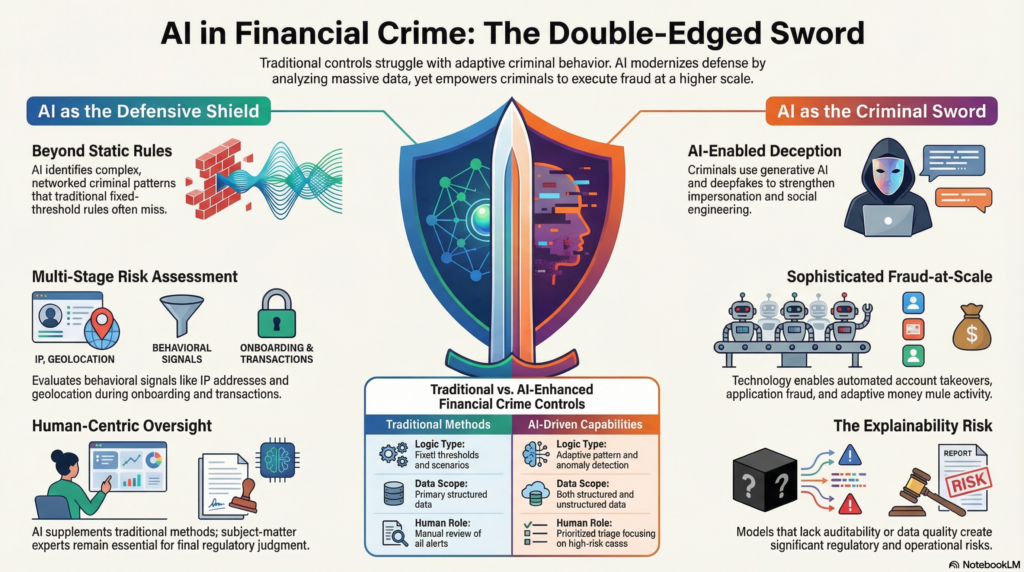

In practical terms, AI is most valuable where financial crime risk is difficult to detect through static rules alone. Traditional controls often depend on fixed thresholds, predefined scenarios, and manual review. Those controls remain important, but they can struggle where criminal behavior is adaptive, networked, or only visible across fragmented data points. AI can help by identifying unusual behavior, linking related entities, detecting anomalous transaction flows, and prioritizing higher-risk cases for human review. FATF’s more recent guidance also points to AI and machine learning being used to assess transaction risk based on signals such as device usage, IP address, geolocation, and behavioral characteristics.
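
To make the idea of combining signals more concrete, the following is a minimal Python sketch that assumes a simple additive score: an amount anomaly (a z-score against the customer's own transaction history) plus fixed weights for a previously unseen device and a mismatch between IP-derived country and country of residence. The `Transaction` fields, the `SIGNAL_WEIGHTS` values, and the review threshold of 3.0 are hypothetical choices for illustration. A production system would typically use a trained and validated model rather than hand-set weights.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Transaction:
    txn_id: str
    amount: float
    new_device: bool   # device not previously seen for this customer
    ip_country: str    # country inferred from the IP address
    home_country: str  # customer's country of residence on file


# Hypothetical weights for illustration only; a real model would learn and validate these.
SIGNAL_WEIGHTS = {"new_device": 1.5, "geo_mismatch": 2.0}


def amount_zscore(amount: float, history: list[float]) -> float:
    """Standard deviations between this amount and the customer's historical mean."""
    if len(history) < 2 or pstdev(history) == 0:
        return 0.0  # not enough history to judge what is unusual
    return (amount - mean(history)) / pstdev(history)


def risk_score(txn: Transaction, history: list[float]) -> float:
    """Combine an amount anomaly with simple device and geolocation signals."""
    score = max(0.0, amount_zscore(txn.amount, history))  # only unusually large amounts add risk
    if txn.new_device:
        score += SIGNAL_WEIGHTS["new_device"]
    if txn.ip_country != txn.home_country:
        score += SIGNAL_WEIGHTS["geo_mismatch"]
    return score


def prioritize(txns: list[Transaction], history: list[float], threshold: float = 3.0):
    """Return (txn_id, score) pairs at or above the threshold, highest first, for human review."""
    scored = [(risk_score(t, history), t.txn_id) for t in txns]
    flagged = [(s, tid) for s, tid in scored if s >= threshold]
    flagged.sort(reverse=True)
    return [(tid, round(s, 2)) for s, tid in flagged]


if __name__ == "__main__":
    history = [100.0, 120.0, 80.0, 110.0, 90.0]  # hypothetical past amounts for one customer
    txns = [
        Transaction("T1", 105.0, False, "GB", "GB"),
        Transaction("T2", 400.0, True, "FR", "GB"),
        Transaction("T3", 110.0, True, "US", "GB"),
    ]
    print(prioritize(txns, history))  # → [('T2', 24.71), ('T3', 4.21)]
```

Keeping the score decomposable means a reviewer can see which signals drove a ranking, which supports explainability; the output is a queue for human review, not a suspicion decision.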

This makes AI relevant across multiple financial crime typologies. In AML, it can support the identification of suspicious transactional behavior and network relationships. In fraud, it can help identify account takeover, application fraud, money mule activity, and payment anomalies. In identity-related risk, it can strengthen remote identity checks and flag inconsistencies across customer attributes and digital behavior. The US Financial Crimes Enforcement Network (FinCEN) has separately emphasized that criminals exploit weaknesses in identity processes at account opening, account access, and when transacting, which is exactly the kind of cross-stage risk pattern AI is often used to assess.
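
Network relationships, such as money mule rings, often surface through attributes that several accounts share. As a simplified stand-in for the graph analytics and entity-resolution techniques used in practice, the sketch below groups accounts that share any identifier (for example a device ID, phone number, or address) using a union-find structure. The `link_accounts` function and the `type:value` identifier format are hypothetical. Real entity resolution would also handle fuzzy matches and weigh how distinctive each shared attribute is, since a shared public Wi-Fi address means far less than a shared device.

```python
from collections import defaultdict


def link_accounts(records: dict[str, set[str]]) -> list[set[str]]:
    """Group accounts that share any identifier.

    records maps account_id -> set of identifiers (hypothetical "type:value" strings).
    Returns clusters (sets of account IDs) containing more than one account.
    """
    parent = {acct: acct for acct in records}

    def find(x: str) -> str:
        # Follow parent links to the cluster root, halving the path as we go.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a: str, b: str) -> None:
        ra, rb = find(a), find(b)
        if ra != rb:
            parent[rb] = ra

    # Index which accounts use each identifier, then merge accounts that share one.
    by_identifier: dict[str, list[str]] = defaultdict(list)
    for acct, identifiers in records.items():
        for ident in identifiers:
            by_identifier[ident].append(acct)
    for accts in by_identifier.values():
        for other in accts[1:]:
            union(accts[0], other)

    clusters: dict[str, set[str]] = defaultdict(set)
    for acct in records:
        clusters[find(acct)].add(acct)
    return [c for c in clusters.values() if len(c) > 1]


if __name__ == "__main__":
    records = {
        "A1": {"device:abc", "phone:111"},
        "A2": {"device:abc"},
        "A3": {"phone:111", "addr:9 High St"},
        "A4": {"device:zzz"},
    }
    # A1 and A2 share a device, A1 and A3 share a phone, so all three form one cluster.
    print(link_accounts(records))
```

Note that linking is transitive: A2 and A3 share nothing directly but end up in the same cluster through A1, which is how indirect relationships become visible.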

AI also matters because the threat environment itself is becoming more AI-enabled. The UK Financial Conduct Authority (FCA) has warned that AI may enable more sophisticated forms of financial crime, fraud, and manipulation, and FinCEN has issued an alert on fraud schemes involving deepfake media created with generative AI. This means AI in financial crime is not only a defensive capability; it is also part of the threat landscape. Firms must therefore evaluate AI from both perspectives: how it can improve detection and efficiency, and how criminals may use it to strengthen impersonation, social engineering, fraud at scale, or evasion techniques.

A mature institution does not treat AI as a replacement for compliance judgment. FATF explicitly notes that technological solutions should supplement traditional methods and should be interpreted by subject-matter experts. That principle is critical. AI can identify patterns, rank risk, summarize information, or support investigative workflows, but it does not remove the need for accountability, escalation standards, legal interpretation, or defensible decision-making. In the financial crime environment, the final question is rarely just whether a pattern is statistically unusual. It is whether that pattern is suspicious in regulatory and operational terms, and that still requires human judgment, governance, and evidence.

Governance is therefore central to the credible use of AI. The FCA’s current approach stresses safe and responsible AI adoption in UK financial markets and explains that existing regulatory frameworks remain relevant to AI use. In practice, this means firms need clear ownership, documented use cases, model governance, testing, validation, performance monitoring, explainability where appropriate, and controls around bias, data quality, and operational resilience. AI that improves efficiency but cannot be understood, challenged, or audited can create as much regulatory risk as it removes. FCA materials continue to frame AI through the lens of responsible adoption rather than unrestricted experimentation.
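
Performance monitoring can take many forms. One widely used check for drift in a model's score or input distribution is the population stability index (PSI), which sums, over score bins, the difference between the recent and reference shares multiplied by the log of their ratio. The Python sketch below assumes scores have already been bucketed into the same bins for a reference period (for example, the validation sample) and a recent production period. The function names are hypothetical, and the 0.1 and 0.25 cut-offs are common industry rules of thumb, not thresholds set by the FCA or any other regulator.

```python
import math


def population_stability_index(expected: list[int], actual: list[int], eps: float = 1e-6) -> float:
    """PSI between two binned distributions of the same score (same bin edges).

    expected: bin counts from the reference period; actual: bin counts from the recent period.
    """
    if len(expected) != len(actual) or not expected:
        raise ValueError("need two non-empty lists of bin counts with the same length")
    e_total, a_total = sum(expected), sum(actual)
    psi = 0.0
    for e, a in zip(expected, actual):
        e_pct = max(e / e_total, eps)  # floor avoids log(0) when a bin is empty
        a_pct = max(a / a_total, eps)
        psi += (a_pct - e_pct) * math.log(a_pct / e_pct)
    return psi


def drift_status(psi: float) -> str:
    """Map PSI to an action using illustrative rule-of-thumb cut-offs."""
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "monitor"
    return "investigate"


if __name__ == "__main__":
    reference = [25, 25, 25, 25]  # hypothetical score-bin counts at validation
    recent = [10, 20, 30, 40]     # hypothetical counts from recent production scoring
    psi = population_stability_index(reference, recent)
    print(round(psi, 3), drift_status(psi))  # → 0.228 monitor
```

A drift signal of this kind does not by itself show that the model is wrong; it tells model owners when revalidation or closer review of outcomes is warranted.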

Data quality is another decisive factor. AI systems are only as reliable as the data, labels, and operational context supporting them. In financial crime settings, poor customer data, fragmented entity records, poorly recorded alert outcomes, or incomplete case histories can materially reduce model effectiveness. This is especially important because AI often operates on signals drawn from multiple systems and channels. If those signals are inconsistent or poorly governed, the model may appear sophisticated while producing weak outcomes. FATF's broader technology guidance repeatedly links the benefits of new technology to the quality of data collection, interpretation, and implementation.
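
Basic data-quality gates can be run before data reaches a model. The following sketch assumes customer records and alerts are available as Python dictionaries; it measures field completeness and the share of closed alerts with no recorded outcome, since such alerts cannot serve as training labels. The field names, the `quality_gate` function, and the 95% and 5% thresholds are hypothetical and would in practice be set through the firm's own data governance.

```python
def completeness(records: list[dict], fields: list[str]) -> dict[str, float]:
    """Share of records with a non-empty value for each field."""
    total = len(records)
    report = {}
    for field in fields:
        filled = sum(1 for r in records if r.get(field) not in (None, ""))
        report[field] = filled / total if total else 0.0
    return report


def unlabeled_alert_rate(alerts: list[dict]) -> float:
    """Share of closed alerts with no recorded outcome (unusable as training labels)."""
    closed = [a for a in alerts if a.get("status") == "closed"]
    if not closed:
        return 0.0
    missing = sum(1 for a in closed if not a.get("outcome"))
    return missing / len(closed)


def quality_gate(records: list[dict], alerts: list[dict], fields: list[str],
                 min_completeness: float = 0.95, max_unlabeled: float = 0.05) -> list[str]:
    """Return a list of issues; an empty list means the (hypothetical) thresholds are met."""
    issues = []
    for field, share in completeness(records, fields).items():
        if share < min_completeness:
            issues.append(f"{field} completeness {share:.0%} below {min_completeness:.0%}")
    rate = unlabeled_alert_rate(alerts)
    if rate > max_unlabeled:
        issues.append(f"{rate:.0%} of closed alerts lack an outcome")
    return issues


if __name__ == "__main__":
    customers = [
        {"id": "C1", "dob": "1980-01-01", "country": "GB"},
        {"id": "C2", "dob": "", "country": "GB"},
    ]
    alerts = [
        {"status": "closed", "outcome": "no_further_action"},
        {"status": "closed", "outcome": None},
        {"status": "open", "outcome": None},
    ]
    for issue in quality_gate(customers, alerts, ["dob", "country"]):
        print(issue)
```

Open alerts are deliberately excluded from the unlabeled rate, because a missing outcome is expected until the alert is closed.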

There is also an important distinction between efficiency gains and effectiveness gains. AI may help reduce manual review effort, improve triage, or summarize investigative material more quickly, but those benefits do not automatically mean the institution is identifying more real financial crime. A professional financial crime framework should test whether AI is actually improving detection quality, reducing false positives without losing genuinely suspicious cases, surfacing higher-value cases, or enabling faster intervention in genuinely suspicious situations. The FCA's recent work on smarter regulation and AI use also emphasizes keeping people at the heart of decision-making, which aligns with the idea that AI should improve human performance, not obscure responsibility.
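
The distinction between efficiency and effectiveness can be made measurable. The sketch below compares a baseline triage process with an AI-assisted one on review volume (efficiency) and on recall and precision against known confirmed cases (effectiveness). The `TriageResult` structure, the `compare` and `is_acceptable` functions, the tolerance parameter, and all figures are hypothetical. In practice, recall can only be estimated against cases the institution has confirmed, for example from a back-testing sample, so it will not capture crime that neither process detected.

```python
from dataclasses import dataclass


@dataclass
class TriageResult:
    alerts_reviewed: int
    confirmed_found: int  # reviewed alerts later confirmed as suspicious


def compare(baseline: TriageResult, candidate: TriageResult, total_confirmed: int) -> dict[str, float]:
    """Efficiency (review reduction) alongside effectiveness (recall, precision)."""
    return {
        "review_reduction": 1 - candidate.alerts_reviewed / baseline.alerts_reviewed,
        "baseline_recall": baseline.confirmed_found / total_confirmed,
        "candidate_recall": candidate.confirmed_found / total_confirmed,
        "baseline_precision": baseline.confirmed_found / baseline.alerts_reviewed,
        "candidate_precision": candidate.confirmed_found / candidate.alerts_reviewed,
    }


def is_acceptable(metrics: dict[str, float], max_recall_loss: float = 0.0) -> bool:
    """Accept only if recall does not fall by more than the agreed tolerance."""
    return metrics["candidate_recall"] >= metrics["baseline_recall"] - max_recall_loss


if __name__ == "__main__":
    baseline = TriageResult(alerts_reviewed=1000, confirmed_found=40)
    candidate = TriageResult(alerts_reviewed=600, confirmed_found=34)
    metrics = compare(baseline, candidate, total_confirmed=40)
    for name, value in metrics.items():
        print(f"{name}: {value:.1%}")
    print("acceptable:", is_acceptable(metrics))
```

With these hypothetical figures, `compare` reports a 40% review reduction and higher precision (about 5.7% versus 4.0%), but recall falls from 100% to 85%, so `is_acceptable` returns `False` under the default zero tolerance. The process is more efficient, yet six confirmed cases would have been missed, which is exactly the gap between efficiency and effectiveness.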

Ultimately, AI is significant in the financial crime environment because it can help institutions and authorities process complexity that is increasingly difficult to manage through static controls and manual review alone. It has the potential to improve risk identification, monitoring, investigations, and operational efficiency across AML, fraud, and wider financial crime functions. But its value depends on disciplined implementation, strong data, clear governance, and informed human oversight. At the same time, firms must recognize that criminals are also using AI to strengthen deception, impersonation, and fraud. For that reason, AI should be treated neither as a miracle solution nor as a purely technical trend, but as a strategic capability and risk factor within the broader financial crime control environment.